The GenAI wave has ushered in a series of discussions, and sooner or later most of them end up revolving around productivity. But much of the productivity debate misses a subtle point: how and where is GenAI being used? Is it being used as a method (the means), or is it embedded in the product itself (the ends)?

Let's first define what is what.

The means are simple. Let's assume you are building a product feature: a good, solid software feature. It can be anything, such as adding search to your website, redesigning the old homepage, or creating a new category in an e-commerce app. The feature adds business value, but it doesn't use any GenAI itself. Here, GenAI is used only to write the code. This is treating GenAI as a means: a tool in the background. The user would never know whether the feature was built with Cursor or Claude Code.

What about GenAI as the end? In this scenario, you are not only using GenAI to write the code; the core product offering itself has GenAI embedded in it. For example, it can be a GenAI-powered data analyst, a document generator for clinical trials, or a text-to-SQL converter. In these use cases, you are not merely using language models for coding. Those capabilities are the end product.

Let me give you a concrete example. At my previous organisation, we built a document generation tool for scientific writers. Under the hood, a language model stitched together structured data from several specified sources, along with free-text summaries, into a draft document. A researcher would review and edit it, but the first draft came from the model. That is GenAI as the end. The model's output was the product. If it hallucinated a dosage or misread a table, that was not a bug in the background. That was the product failing. And it did happen.

When we are using GenAI as a means and are deep in the productivity debate, the implicit assumption is that LLMs or SLMs can produce many lines of code very quickly. That is absolutely true. And in most of these cases the output itself is unchanged (the feature would exist even without GenAI), so there can be a real productivity bump, provided the tools are used correctly.

For GenAI as the end, that is hardly the case. Yet from what I see, read, and hear, people treat every use of GenAI as a means, even when the company or team is building AI-native features. Shipping a text-to-SQL product is not the same as shipping a search bar.

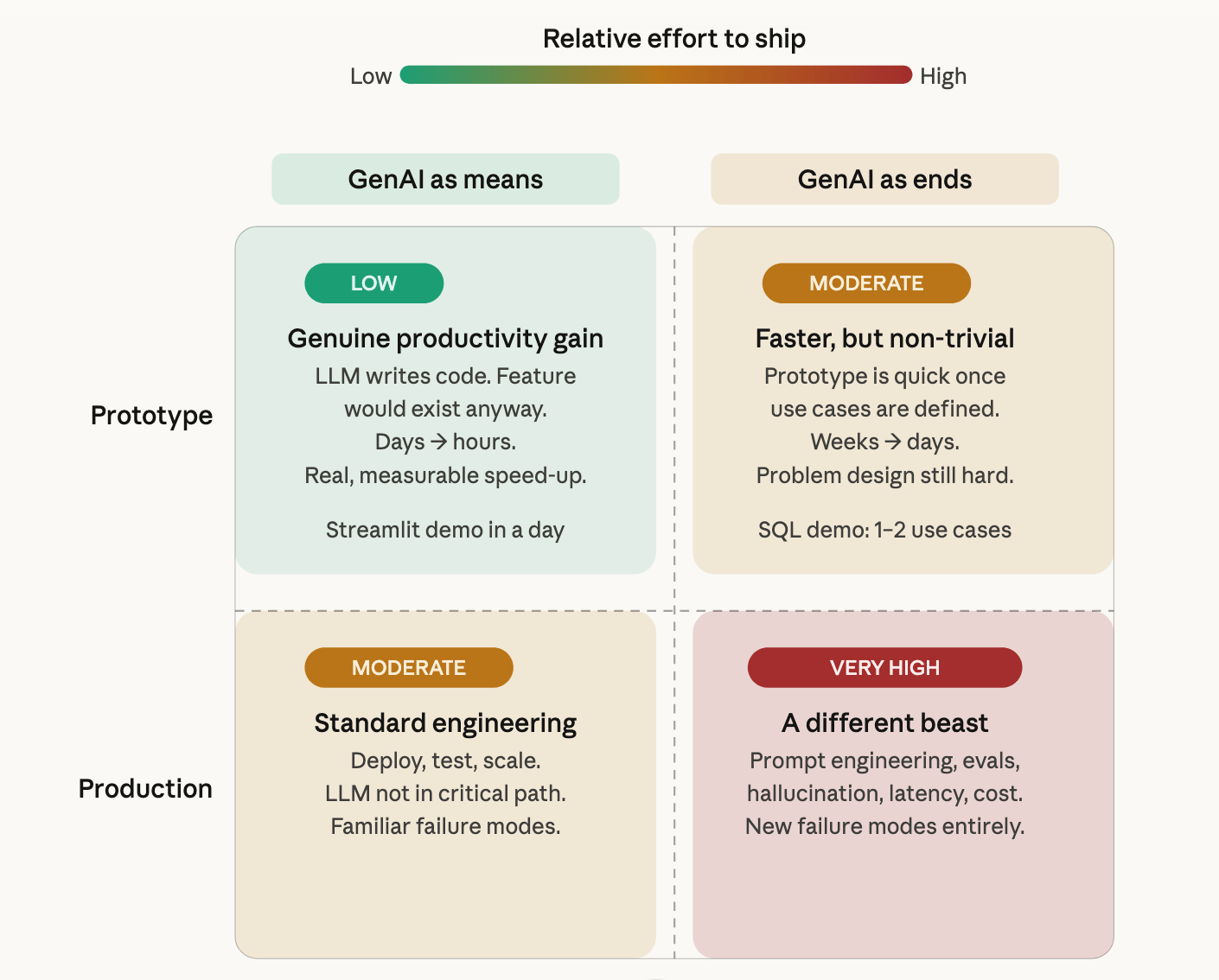

GenAI as means vs ends

Once you start looking, this misframing shows up everywhere. A LinkedIn post celebrating "we shipped 10x faster" rarely specifies what was shipped. Similarly, productivity metrics like lines of code or tickets closed don't distinguish between a team that used Copilot to build a payment flow and a team that shipped an AI-native feature on top of an LLM. The numbers look the same. The problems are not.

A related distinction is the one between prototyping and production.

Prototyping has become easier in both cases, no doubt. If I had to give stakeholders a demo in Streamlit or Shiny, building the minimum viable product used to take at least a week: lots of back and forth on Stack Overflow, Googling and whatnot. Now it often takes a day of intense prompting. Most companies have agreements with model providers, so you get almost unlimited tokens and a huge context window.

Similarly, even when your core product offering is GenAI-embedded, prototyping is easier. For a text-to-SQL tool, one or two solid use cases and the prototype is done.

Productionising is a different beast altogether, and the difficulty goes up in both cases. But when the core offering is a GenAI-driven feature, the increase is far steeper than when GenAI is just a coding agent. You now have a model that hallucinates, behaves differently across prompt variations, and has different token-length requirements for different use cases. You need better evaluation frameworks to gauge the model's performance. You need to think about what happens when the model is confidently wrong and a user acts on it. None of this has a clean engineering solution (yet), and most of it does not show up in the prototype at all.
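To make "better evaluation frameworks" a little more concrete for the text-to-SQL case, here is a minimal Python sketch of one common approach, execution-based evaluation: run the generated query and a hand-written reference query against the same database and count how often the results match. Everything here is hypothetical and illustrative: the `orders` table, the two-question eval set, and the `model_prompt_v1`/`model_prompt_v2` functions, which are hard-coded stand-ins for a real model called with two prompt variants. It is not a description of any particular production setup; a real harness would need many more cases, repeated runs, and checks for partial correctness.

```python
import sqlite3
from typing import Callable, List, Optional, Tuple


def make_db() -> sqlite3.Connection:
    """Build a tiny in-memory table standing in for real data (illustrative values only)."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE orders (id INTEGER, region TEXT, amount REAL)")
    conn.executemany(
        "INSERT INTO orders VALUES (?, ?, ?)",
        [(1, "EU", 120.0), (2, "US", 80.0), (3, "EU", 40.0)],
    )
    return conn


# Hypothetical eval set: each question is paired with a hand-written reference ("gold") query.
EVAL_SET: List[Tuple[str, str]] = [
    ("Total order amount in EU", "SELECT SUM(amount) FROM orders WHERE region = 'EU'"),
    ("Number of orders", "SELECT COUNT(*) FROM orders"),
]


def run(conn: sqlite3.Connection, sql: str) -> Optional[list]:
    """Execute a query; return its rows in a stable order, or None if the SQL fails."""
    try:
        return sorted(conn.execute(sql).fetchall(), key=repr)
    except sqlite3.Error:
        return None


def execution_accuracy(generate_sql: Callable[[str], str], conn: sqlite3.Connection) -> float:
    """Fraction of questions whose generated SQL returns the same rows as the gold SQL."""
    hits = 0
    for question, gold in EVAL_SET:
        predicted = run(conn, generate_sql(question))
        if predicted is not None and predicted == run(conn, gold):
            hits += 1
    return hits / len(EVAL_SET)


# Hypothetical stand-ins for an LLM called with two prompt variants (hard-coded, no real model).
def model_prompt_v1(question: str) -> str:
    return {
        "Total order amount in EU": "SELECT SUM(amount) FROM orders WHERE region = 'EU'",
        "Number of orders": "SELECT COUNT(id) FROM orders",  # different SQL, same result
    }[question]


def model_prompt_v2(question: str) -> str:
    return {
        # Plausible-looking but wrong: 'eu' matches no rows, so SUM returns NULL.
        "Total order amount in EU": "SELECT SUM(amount) FROM orders WHERE region = 'eu'",
        "Number of orders": "SELECT COUNT(*) FROM orders",
    }[question]


if __name__ == "__main__":
    conn = make_db()
    print(execution_accuracy(model_prompt_v1, conn))  # 1.0
    print(execution_accuracy(model_prompt_v2, conn))  # 0.5
```

Comparing results rather than SQL strings means `COUNT(id)` and `COUNT(*)` both count as correct here, while the lowercase `'eu'` filter in the second variant (SQLite's default `=` comparison is case-sensitive) returns NULL instead of raising an error and is only caught because the results are checked. That kind of silent, confident wrongness is exactly what a prototype demo rarely surfaces.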

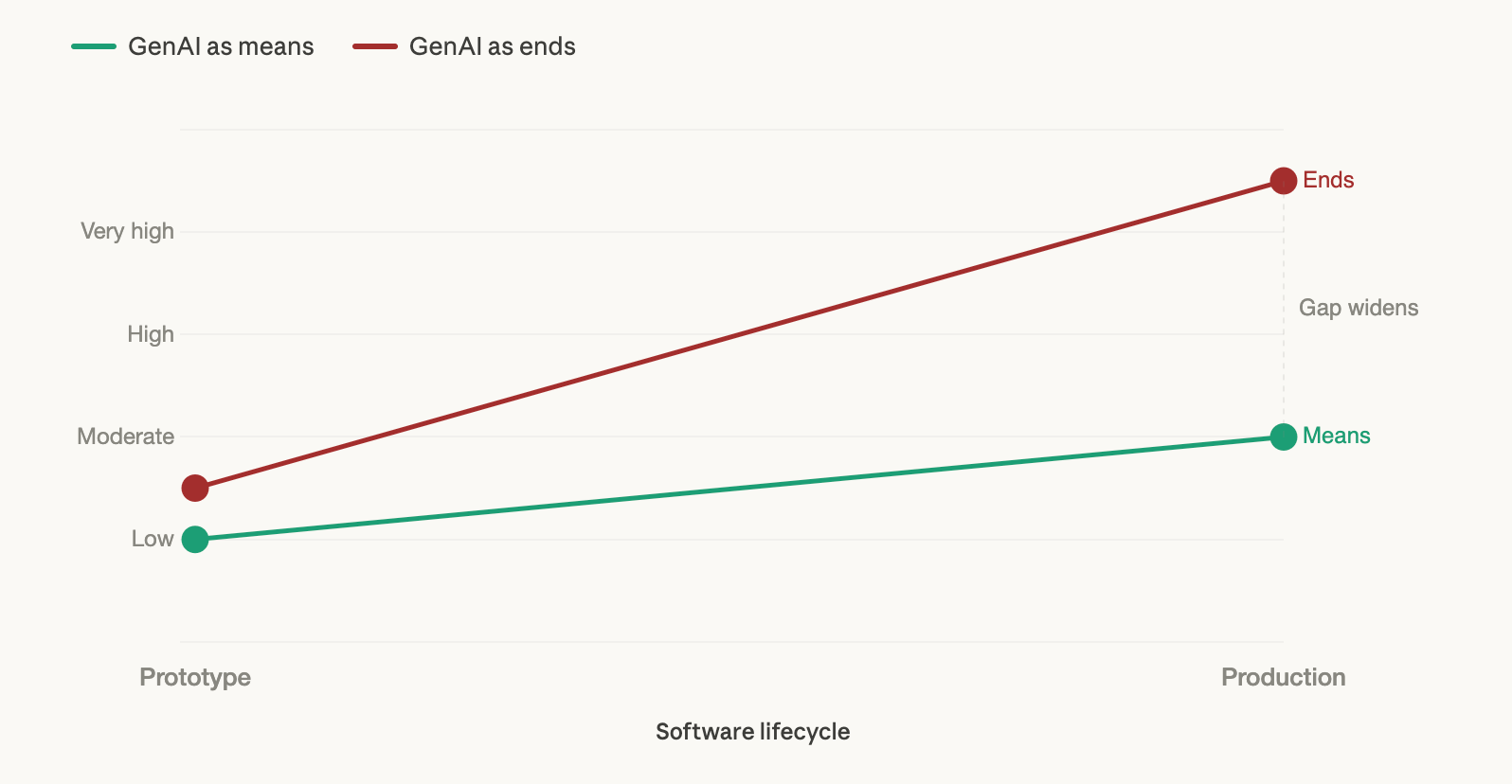

How does the degree of difficulty change?

The slope chart above is not precise. The numbers are illustrative. But the shape of it is right: the two lines start close and end very far apart.

The next time someone shares a productivity stat about GenAI, it is worth pausing for a second. Which kind of productivity? A coding assistant helping ship a search bar is a real gain, no argument there. But if the product itself runs on a language model, the prototype was never the hard part. It has never been easier to build one. That is also, unfortunately, the easiest part of the whole lifecycle.

Kant warned against treating ends as merely means. He was talking about people. But the pattern, as it turns out, is not limited to philosophy.

Enjoyed the grain? Subscribe for more takes on data, products, and the decisions in between.
